I became a Bitcoin enthusiast back in the early days, when it was trading at around $1,000. The philosophy of decentralization, the end of centrally controlled fiat currency, a robust, tamper-proof, anonymous blockchain: all of it seemed like a utopian and revolutionary future. I was instantly fascinated.

Over the years, Bitcoin has been adopted as a payment method by more and more merchants around the world. The number of nodes and the number of transactions grew explosively. I felt like the future was arriving.

But as time went on, my enthusiasm for Bitcoin as a replacement for fiat currency slowly faded. Bitcoin still has value, but most likely as an asset or commodity, and far less as a currency. Below are the concerns and disappointments I ran into when I imagined Bitcoin becoming the currency of the future.

Currency or Asset?

The first concern is the BTC-USD price shooting up like a rocket. This undermines its usefulness as a practical means of payment.

“Who would use Bitcoin to pay for something, when the value of Bitcoin can increase 10% the next day?”

The faster a currency appreciates, the less willing anyone is to part with it. Imagine a country where you could pay with either US dollars or Japanese yen. If 1 USD were gaining 10% month over month (MoM) against JPY, who would actually spend USD?
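To put that hypothetical 10% MoM figure in perspective, here is a small sketch of the compounding. The rate is the made-up scenario from the paragraabove, not real exchange-rate data, and `compound_growth` is just an illustrative helper.

```python
def compound_growth(monthly_rate: float, months: int) -> float:
    """Return the growth multiple after compounding `monthly_rate` for `months` months."""
    return (1 + monthly_rate) ** months


# Hypothetical scenario: USD appreciating 10% month over month against JPY.
yearly_multiple = compound_growth(0.10, 12)
print(f"After 12 months, 1 USD buys {yearly_multiple:.2f}x as many yen as today")
```

At that pace, spending 1 USD today means giving up roughly three times as many yen a year later, which is exactly why nobody would want to spend it.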

This shift in perception, from Bitcoin as a currency to Bitcoin as a speculative asset, is the source of one of my doubts.

Transaction Inefficiency and Scale

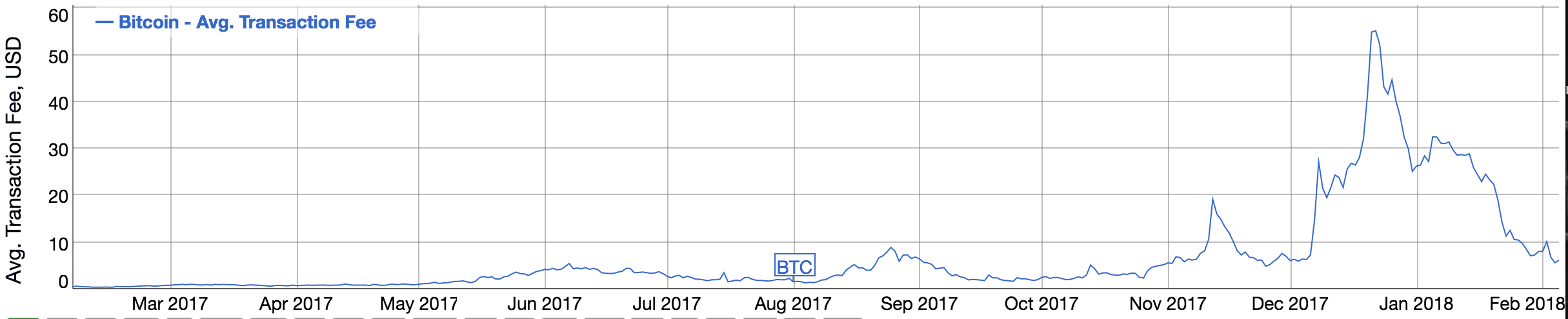

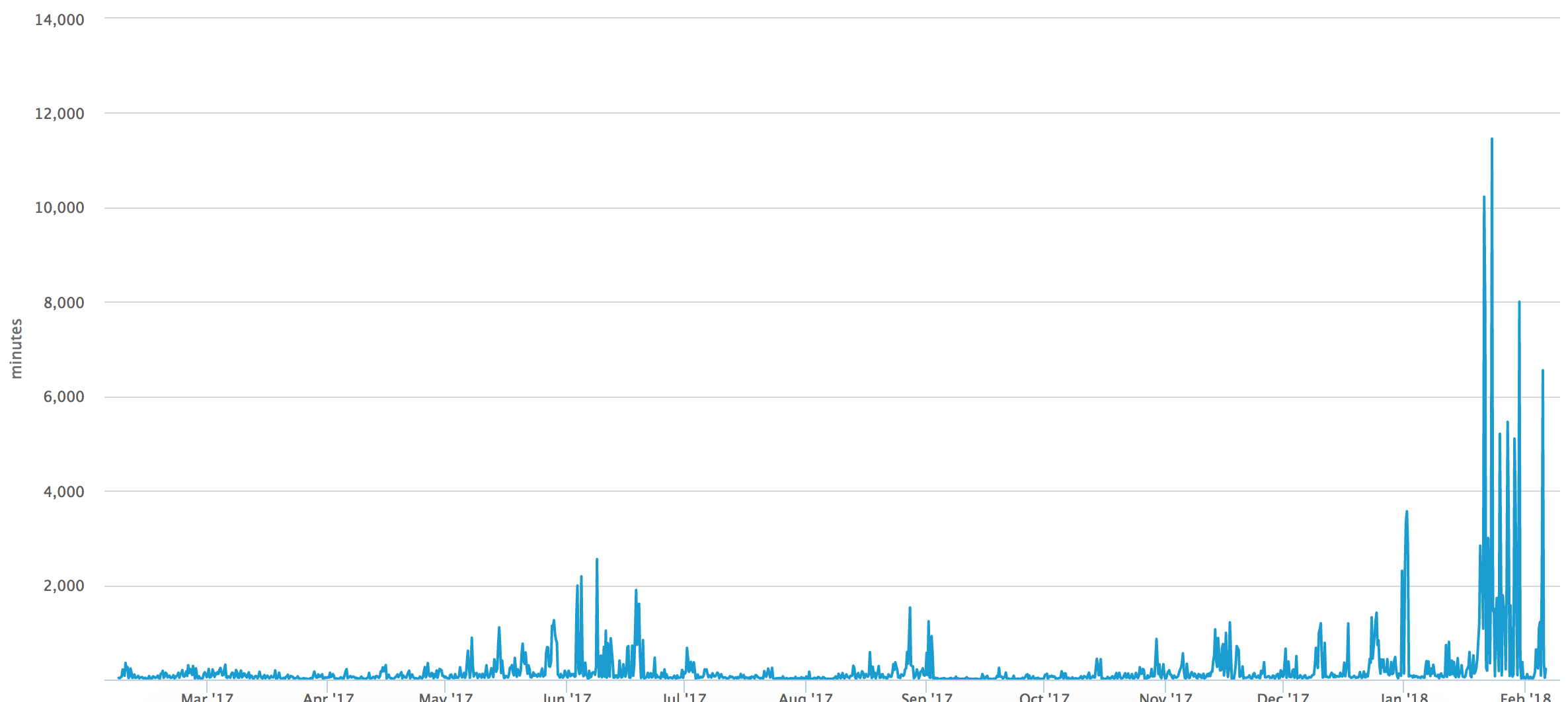

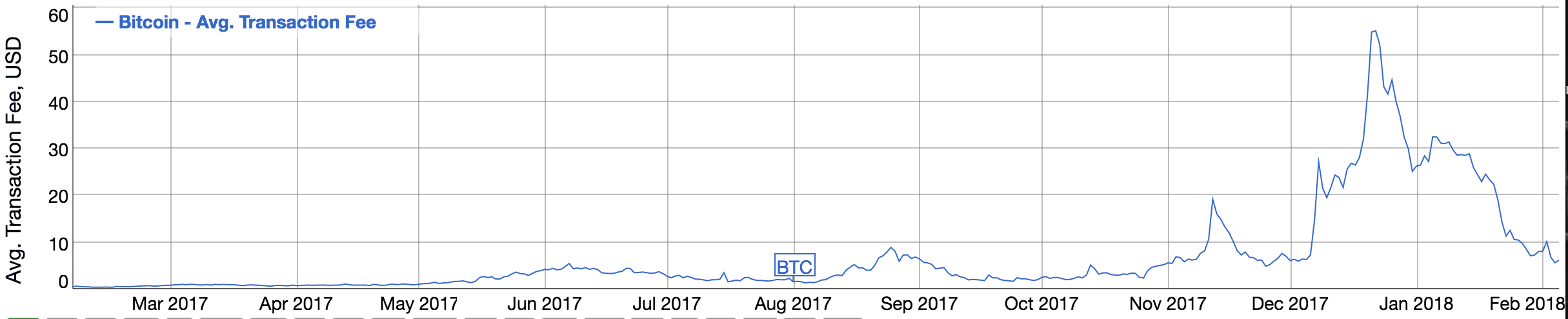

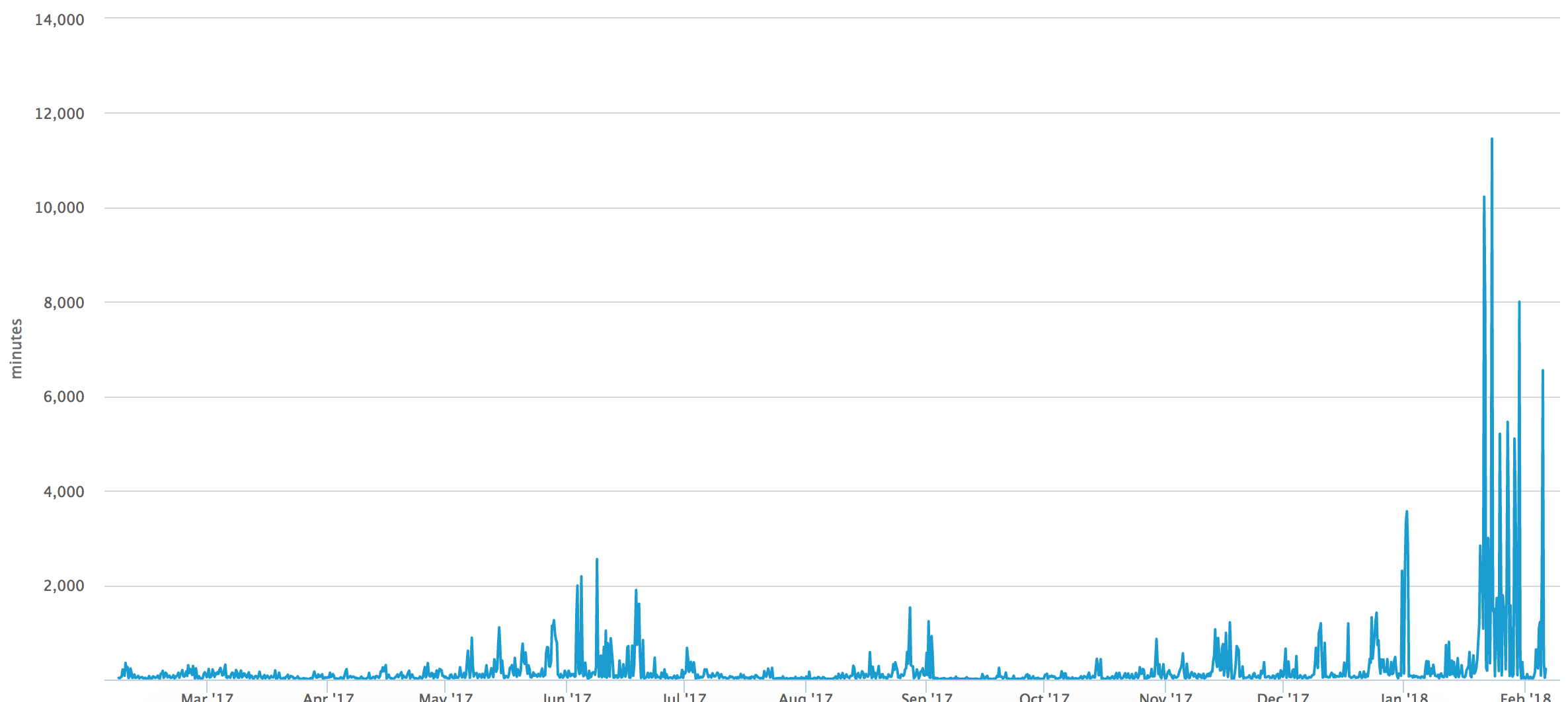

Look at both the transaction fees and the confirmation times over the past year:

https://bitinfocharts.com/comparison/bitcoin-transactionfees.html#1y

https://blockchain.info/charts/avg-confirmation-time?timespan=1year

Transaction fees have come down from the preposterous $40+ range of December 2017, but they are still no lower than $5. A $5 fee is not realistic in a world where somebody can buy a can of Coke with Bitcoin.

The same goes for the confirmation time of a Bitcoin transaction. Even disregarding the spikes as anomalies, we still see confirmation times well above 100 minutes. Again, a commercial transaction that takes more than an hour just to be confirmed is not practical at all.

What is unsettling is that all of this gets worse as total transaction volume increases: each block can hold only a limited number of transactions, so when demand rises, users outbid each other for space and the backlog waits longer. The decrease in fees and confirmation times from December 2017 to February 2018 came from the decrease in transaction volume over the same period. Once transaction volume increases again, so will the fees and confirmation times.
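A toy model makes the link between volume and fees concrete. It assumes, hypothetically, that each block has a fixed number of transaction slots and that miners simply include the highest-paying transactions first. Real Bitcoin blocks are limited by size/weight and fees are compared per byte, so treat this as a sketch of the mechanism rather than a model of the actual fee market. The helpers `clearing_fee` and `simulate`, the capacity, and the fee range are all made up for illustration.

```python
import random


def clearing_fee(bids: list[float], capacity: int) -> float:
    """Lowest fee that still makes it into a block with `capacity` slots,
    assuming miners take the highest-paying transactions first."""
    if not bids:
        return 0.0
    if len(bids) <= capacity:
        # Block is not full: even the cheapest bid gets in.
        return min(bids)
    # Block is full: the capacity-th highest bid is the cutoff.
    return sorted(bids, reverse=True)[capacity - 1]


def simulate(demands: list[int], capacity: int, seed: int = 42) -> dict[int, float]:
    """Clearing fee at several demand levels, in hypothetical dollars."""
    rng = random.Random(seed)
    # Hypothetical willingness to pay for the largest demand level.
    pool = [rng.uniform(0.1, 50.0) for _ in range(max(demands))]
    # Each demand level uses a prefix of the same pool, so more demand
    # only ever adds bidders competing for the same block space.
    return {d: clearing_fee(pool[:d], capacity) for d in demands}


if __name__ == "__main__":
    for demand, fee in simulate([1_000, 2_000, 4_000, 8_000], capacity=2_000).items():
        print(f"{demand:>5} pending txs -> lowest included fee ${fee:.2f}")
```

Because every demand level draws from a prefix of the same pool of bids, adding demand can only raise the lowest included fee or leave it unchanged, which is the dynamic described above.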

I am well aware that many people in and around the Bitcoin community are trying to tackle this, whether through hard forks or UASFs (user-activated soft forks). But the amount of debate and politics around the matter, and the difficulty of reaching a clear consensus, leave me with little faith that it will be resolved.

Speaking of politics…

Centralization

The fact of the matter is that Bitcoin is becoming more and more centralized. Large-scale miners have too much power and influence over the network, and that power only grows when multiple miners join forces, as they do in mining pools.

Large exchanges and “whales” (holders of very large amounts of Bitcoin) can also move the price of Bitcoin and wield influence within the network. This is not the utopian world that Bitcoin's early believers were dreaming of.

But it may be a little unfair to pin this on Bitcoin. Lately I have become pessimistic about true decentralization in general.

The Decentralization Network Paradox

This is what I call the “Decentralization Network Paradox”. It goes like this:

For a given decentralized network:

- The more value the network provides to its participants, the more incentive there is for large entities to control more of its nodes.
- The less value the network provides to its participants, the less likely the network is to gain traction.

The most obvious example of this is the internet. It was built on a decentralized philosophy, but as it gained traction, it started to tilt toward centralization.

ISPs and network providers are able to charge for internet access (since they paid upfront for the infrastructure). This was a lucrative opportunity for the early network providers, and many large corporations (including Enron) came in to capture that value.

The result is today's world, where a few gigantic network providers control the vast majority of internet connections and have the ability to restrict what we can and cannot see, and how much we pay for it.

On the other hand, popular P2P networks such as BitTorrent have not fallen victim to centralization (as far as I know), because there is no way for participants to extract value from the network. No value, no incentive to control.

Bitcoin sits at the other end of the spectrum: a tremendous amount of value can be extracted through mining (helped by the rising price of Bitcoin itself). This led to a very quick and sinister centralization of the network.

- The brilliant Vitalik Buterin goes into detail about the definition of decentralization in his essay “The Meaning of Decentralization”.

As far as I can tell, it is very difficult to escape the Decentralization Network Paradox. The only way to truly keep a network decentralized is to avoid providing tangible value to its participants while still getting them to participate.

BitTorrent does this elegantly, because the participants of the network are either:

- Actively searching for files

or

- Passively serving pieces of files they already have

The passive serving of files is the crucial point. Participants do not need to do anything special to participate and provide value to others in the network (other than paying for upload bandwidth, which these days is mostly covered by a flat-rate internet subscription). Therefore they do not need any explicit value from the network.

Putting the pieces together

It’s hard for me to imagine a truly decentralized, efficient network that people will actually use as a currency in place of today's fiat currencies.

As ingenious as Bitcoin's proof-of-work blockchain is, it requires a very large amount of computing power. The network justifies that computing power by rewarding participants with actual monetary value. So, following the paradox above, this kind of blockchain is intrinsically unable to escape centralization.

The current state of Bitcoin, and of most cryptocurrencies in general, amounts to the formation of a new financial market, slightly different from traditional securities or commodities markets. Such a market clearly produces real value, and it is eye-opening to witness a market this large emerge from thin air in less than 10 years.

But that does not translate into Bitcoin achieving the technological and ideological goals it has held since its emergence.